Containers let you pack more computing workloads onto a single server, and they let you add capacity for new tasks extremely quickly.

Containers and virtual machines differ in an important way. Linux containers give each application running on a server its own isolated environment, but all of those containers share the host server’s operating system. Because a container does not need to boot an operating system of its own, it starts much faster, typically in about a second, whereas a virtual machine needs a couple of minutes for the same procedure.

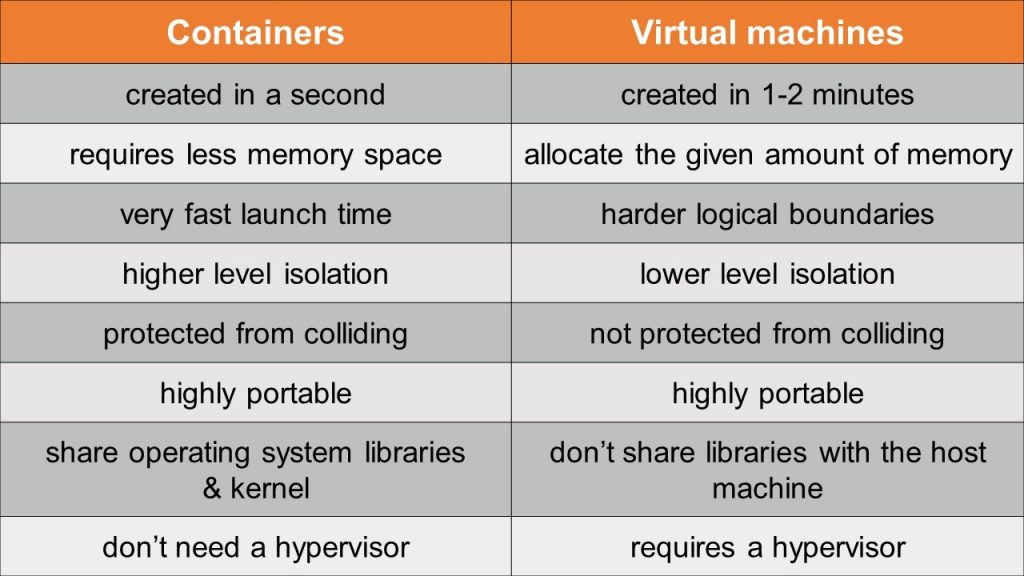

The points below explain how containers compare with virtual machines:

Containers have proven themselves at massive scale, most notably at Google, the world’s biggest implementer of Linux containers, which uses them for its internal operations. Google is also expert at hosting containers in its App Engine and Compute Engine services, but like other cloud suppliers, it puts containers from different customers into separate KVM virtual machines, because VMs provide clearer isolation boundaries. Google has reported launching about 2 billion containers every week, which works out to roughly 3,300 every second. Containers are one of the secrets behind the speed and smooth operation of the Google Search engine, and that track record makes them attractive to other organizations as well.
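A weekly launch count converts to an average per-second rate with simple arithmetic. The small sketch below assumes launches are spread evenly across a 7-day week; real launch traffic is bursty, so this is only an average.

```python
# Average launch rate implied by a weekly total, assuming launches are
# spread evenly across a 7-day week (real traffic is burstier than this).
SECONDS_PER_WEEK = 7 * 24 * 60 * 60  # 604,800 seconds


def per_second(per_week: float) -> float:
    """Convert a weekly count into an average per-second rate."""
    return per_week / SECONDS_PER_WEEK


rate = per_second(2_000_000_000)
print(f"about {rate:,.0f} containers per second")
```

Running it prints `about 3,307 containers per second`.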

Because containers do not each carry their own operating system, hundreds or even thousands of them can run on a single host, which is why IT specialists call them “lightweight”.

Docker is a company that came up with a standard way to package a container workload so that it can be moved around and still run predictably in any container-ready environment, but it is not the only container provider. FreeBSD Jails and Solaris Zones were among the first container technologies, but each was limited to its own operating system. When Google engineers and other developers created Linux control groups (cgroups) and got container functionality included in the Linux kernel, containers were suddenly within reach of every business and government data centre.

Containers can speed up updates and save IT work. Container images are built in layers that can be accessed independently of one another. At the same time, a big application can be broken down into many small services, each in its own container. These small services can be updated more often, and updating them is less risky than updating one large application.

Containers still leave some problems unresolved. What if the application needs to run on 10, or 1,000, different servers? Docker on its own was designed around running containers on a single computer, but big companies need to know that a tool can handle massive scale. Google addressed this problem with its own multi-container management system, an effort that led to the open-source Kubernetes project.
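To make the scaling question concrete: a multi-container management system has to decide which server each container runs on. The sketch below illustrates that placement problem with simple first-fit placement by CPU request. The `Server` class, `place_first_fit` function, node names, and millicore figures are all hypothetical; this is not how Kubernetes actually schedules workloads (its scheduler filters and scores nodes using many more criteria).

```python
from dataclasses import dataclass, field


@dataclass
class Server:
    """A hypothetical host with a fixed CPU budget, in millicores."""
    name: str
    cpu_capacity: int
    placed: list = field(default_factory=list)  # (container, cpu) pairs

    def free_cpu(self) -> int:
        return self.cpu_capacity - sum(cpu for _, cpu in self.placed)


def place_first_fit(containers, servers):
    """Put each (name, cpu_request) on the first server with enough free CPU.

    Returns a dict mapping container name -> server name. Raises
    RuntimeError if some container fits on no server (containers placed
    before the failure stay recorded on their servers).
    """
    assignment = {}
    for name, cpu in containers:
        for server in servers:
            if server.free_cpu() >= cpu:
                server.placed.append((name, cpu))
                assignment[name] = server.name
                break
        else:  # inner loop ended without a break: no server had room
            raise RuntimeError(f"no server has room for {name} ({cpu}m CPU)")
    return assignment


servers = [Server("node-a", 4000), Server("node-b", 4000)]
containers = [("web-1", 1500), ("web-2", 1500), ("api-1", 2000), ("worker-1", 1000)]
print(place_first_fit(containers, servers))
```

Here `api-1` no longer fits on `node-a` after the two web containers, so it lands on `node-b`, while the smaller `worker-1` still fills the remaining room on `node-a`. Real orchestrators also handle failures, rescheduling, and rolling updates, which is much of why such a system is needed at scale.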

Those in constant pursuit of better, faster, and cheaper computing see a lot to like in containers. An old-style x86 server running a single application typically uses only about 10% to 15% of its CPU, at best. Virtualized servers push that utilization up to 40% or 50%, and in rare cases 60%.
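To see why utilization matters for cost, fix an amount of work and ask how many servers it takes at the utilization levels quoted above. This is a back-of-the-envelope sketch: the load of 100 fully busy server-equivalents is a made-up figure, and real consolidation is also limited by memory, I/O, and peak demand.

```python
def servers_needed(load: int, utilization_pct: int) -> int:
    """Servers needed to carry `load` (work equal to that many fully busy
    servers) when each server averages `utilization_pct` percent CPU.

    Uses integer ceiling division so the result is exact.
    """
    if not 0 < utilization_pct <= 100:
        raise ValueError("utilization_pct must be between 1 and 100")
    return (load * 100 + utilization_pct - 1) // utilization_pct


LOAD = 100  # hypothetical: work equal to 100 servers running flat out

for label, pct in [("single-application", 10), ("single-application", 15),
                   ("virtualized", 40), ("virtualized", 60)]:
    print(f"{label:<18} at {pct:>2}% CPU: {servers_needed(LOAD, pct):>4} servers")
```

With that hypothetical load, 10% utilization needs 1,000 servers, while 60% needs 167: the same work on roughly a sixth of the hardware.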

Containers hold the promise of using an ever-higher percentage of the CPU, lowering the cost of computing and getting more bang for the hardware, power, and real estate investment. Containers also carry a risk: because many containers share one host operating system, if something goes wrong, such as a security breach, it may go wrong in a much bigger way. That’s why there’s no rush to adopt containers everywhere. Containers are worth using, but with caution, and adoption should proceed at a deliberate, not breakneck, pace.

Want to find out more about containers? Having difficulty configuring them? The SuperAdmins DevOps team can consult and help with any container-related question.